The Week Everything Changed: Google's AI Music Empire, Suno's Peace Treaty, and What It Means for Indie Musicians

There are weeks where the AI music world moves an inch. And then there’s this week.

Between February 24th and 26th, 2026, three tectonic shifts happened almost simultaneously — the kind of events that individually would dominate headlines for a month, but instead piled on top of each other like the universe decided to speedrun the future of music all at once.

Let’s break down what happened, why it matters, and — most importantly — what it means if you’re a musician trying to navigate this increasingly wild landscape.

Google Just Built the Death Star of AI Creativity

On February 25th, Google announced updates to Flow, its AI-powered image and video platform, that will make it easier to create complex compositions and enhance content in the app. But calling them “updates” is like calling the moon landing a “quick trip.” What Google actually did was absorb two separate experimental tools, Whisk and ImageFX, directly into Flow, creating a single unified workspace for AI-powered visual creation.

Since Flow launched last year, users have created over 1.5 billion images and videos for creative projects ranging from films to music videos to product campaigns. That’s a staggering number. And now Google is saying: all those separate tools you’ve been bouncing between? They now live under one roof.

Instead of wrangling a paragraph of adjectives hoping a model reads your mind, Flow now encourages a visual-first workflow. You generate candidate images with Nano Banana, refine them until they match your intent, then pass those frames into Veo 3.1 as “ingredients.” Veo then uses those images to anchor color, framing, subject detail, and mood — producing clips that stay faithful to your original vision.

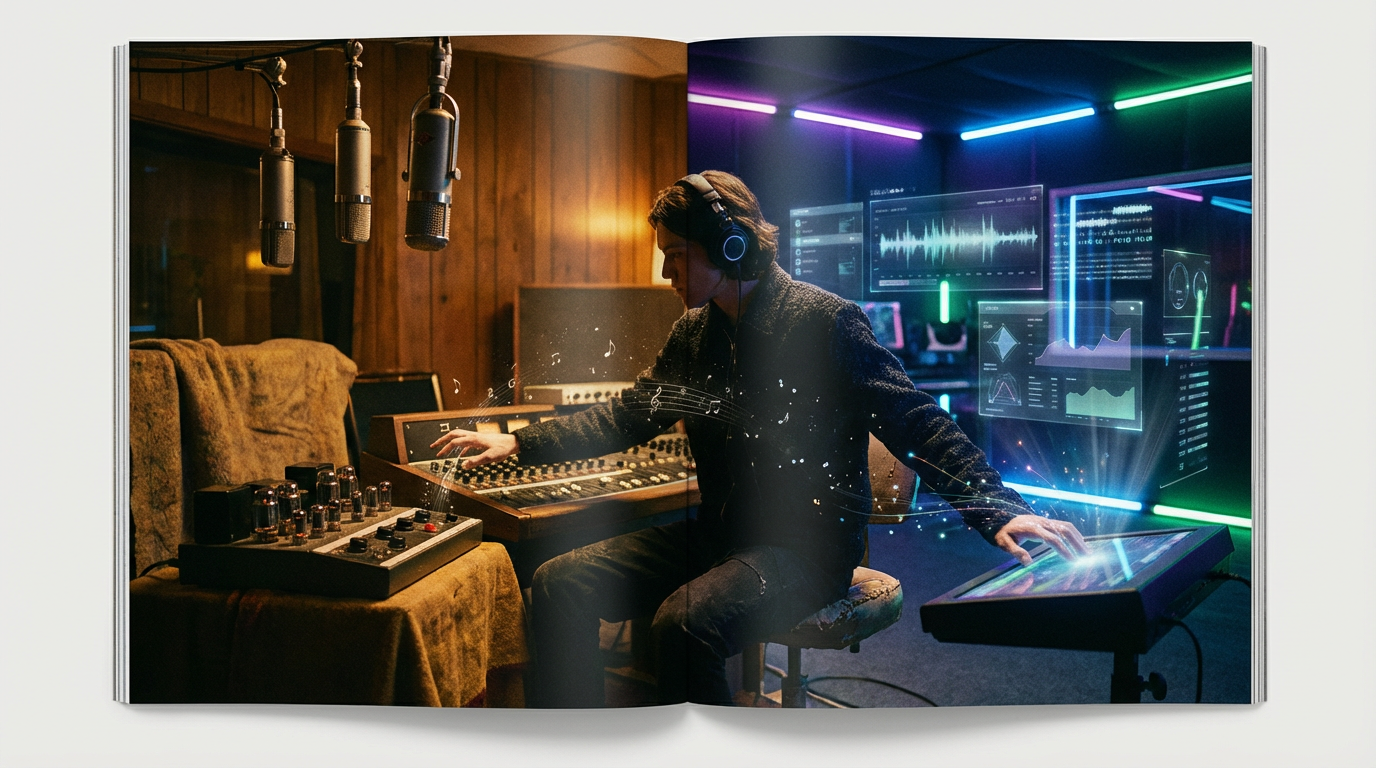

For music video creators, this is huge. Think about the current workflow: you brainstorm visual concepts, maybe create mood boards in one tool, generate images in another, animate them in a third, then edit in a fourth. Google just compressed that entire pipeline into a single browser tab.

Beginning in March, users will be able to transfer all of their Whisk and ImageFX projects and assets directly into Flow, which means all existing assets will be immediately available within the app.

The Real Signal: Google Wants to Own the Entire Creative Stack

Here’s what makes this move particularly significant for musicians. The day before the Flow announcement, AI-powered music-making platform ProducerAI joined the Google Labs family in the tech giant’s latest major leap into AI content creation.

Under Google, ProducerAI now runs on a preview version of Lyria 3 for music generation, Gemini for its chat interface, Google’s Nano Banana model for album art, and Veo for AI-powered music videos, with all outputs embedded with Google’s SynthID watermark for identifying AI-generated content.

Read that list again. Lyria 3 for music. Gemini for conversation. Nano Banana for visuals. Veo for video. SynthID for watermarking. That’s audio, text, image, video, and provenance tracking — all under one company, feeding into one ecosystem.

ProducerAI, formerly known as the startup Riffusion, gained traction for its ability to turn text prompts into short musical clips.

Initially, ProducerAI could only create very short clips. But now the platform enables users to create tracks up to three minutes long, which makes it a lot more useful for people looking to produce a full-length track instead of short loops to use on social media.

Wyclef Jean Enters the Chat (And That Matters More Than You Think)

Google didn’t just make product announcements — they rolled out a Grammy-winning co-sign. Google shared that three-time Grammy-winning rapper Wyclef Jean used the Lyria 3 model and Google’s Music AI Sandbox on his recent song “Back From Abu Dhabi.”

Jean recalls wanting to know what a flute would sound like in a track he already recorded, and being able to use Google’s tools to quickly add a flute sound to the mix. That’s not replacing the artist — that’s augmenting one. Wyclef already knows what he wants. The AI just gets him there faster.

And his take on the whole thing is worth hearing:

“What I want everybody to understand […] is you’re in the era where the human has to be the most creative,” Jean said. “There’s one thing that you have over the AI: a soul.”

It is hard to overstate the significance of artists like Wyclef, Lecrae, and The Chainsmokers publicly endorsing these tools. ProducerAI was founded by Seth Forsgren and Hayk Martiros, who originally launched it under the name Riffusion, an open-source hobby project that went viral in December 2022. In just over three years, it went from a hobby project to a Google acquisition with Grammy-winning endorsements. That trajectory tells you everything about where this market is headed.

Meanwhile, the Rebels Are Making Peace

As if the Google developments weren’t enough, today — February 26th — the Associated Press published a sweeping feature on the most dramatic reconciliation story in AI music: Suno and Udio are making peace with the record labels that sued them.

The process of training AI on beloved musicians of the past and present to produce synthetic approximations of their work has angered the music industry. Now, after users of Suno and New York-based Udio have flooded the internet with millions of AI-generated songs, some of which have found their way onto streaming services like Spotify, the leaders of both companies are trying to negotiate with record labels to secure a foothold in an industry that shunned them.

The numbers are eye-popping. Fresh off a $250 million Series C fundraise and a valuation of $2.45 billion, Suno has quickly become the most well-known company for creating realistic AI-generated music today.

According to an investor pitch deck obtained by Billboard, Suno generates a Spotify catalog’s worth of music every two weeks.

Let that sink in. An entire Spotify catalog. Every. Two. Weeks.

The Settlement Scorecard

Here’s where things stand on the legal front:

Suno struck a settlement with Warner Music Group last year.

Udio came to the table to start settling its disputes, beginning with a deal with Universal in late 2025. As part of that deal’s announcement, Udio vowed to pivot its service away from creating new songs with a simple prompt based on unlicensed training data to become a fully-licensed music remixing and fan engagement platform. Since then, Udio has also struck a similar deal with Warner Music Group.

Only one major label, Sony, has not settled with either startup as the lawsuits move forward in Boston and New York federal courts.

The Udio pivot is especially fascinating. They’re essentially reinventing themselves as a licensed AI platform where fans can officially remix and interact with their favorite artists’ catalogs. It’s the difference between piracy and iTunes — same technology, completely different business model.

Like ride-hailing company Lyft, which pitched itself as the friendly alternative to Uber’s aggressive expansion tactics more than a decade ago, Udio embraces its underdog status.

The Solomon Ray Problem

The AP story also highlighted something that should make every musician sit up and pay attention. A user created a fictional AI singer called Solomon Ray using a stack of AI tools:

He uses ChatGPT to write lyrics, Suno to generate songs and other AI tools to create cover art and promotional videos under the Solomon Ray name. And the results are apparently good enough to be scary: “(Solomon Ray) has an immaculate voice. He doesn’t get sick. You know, he doesn’t have to take leave, he doesn’t get injured and he can work faster than I can work.”

This is the tension at the heart of everything. The tools are powerful enough to create compelling music without any traditional musical skills. The question isn’t whether they can; they already do.

The Convergence Is the Story

What makes this particular week so remarkable isn’t any single announcement — it’s the convergence. Consider what’s happening simultaneously:

- Google is consolidating its scattered AI creative tools into a unified pipeline that goes from prompt to music to image to video in one workspace
- AI music startups are legitimizing themselves through licensing deals with the same labels that tried to kill them
- Grammy-winning artists are publicly co-signing AI as a creative partner, not a replacement
- AI-assisted artists like Breaking Rust and Cain Walker are climbing real charts, in their case the country charts
- Even Apple is getting in on it: iOS 26.4 introduces a new AI-powered “Playlist Playground” feature that leverages Apple Intelligence, allowing users to generate a custom 25-song playlist from a text prompt

This isn’t the “AI might disrupt music someday” story anymore. As Billboard put it, 2026 will be the year that AI truly makes a monumental impact on the music business — whether through the songwriting process, the determination of legal frameworks around copyright or establishing the role that AI-assisted artists will play in the industry.

What This Means for You (The Actual Musician)

Okay, enough industry analysis. Let’s get practical. If you’re a musician reading this in February 2026, here’s what this convergence week actually means for your creative life:

The Visual Bar Just Got Raised (Again)

With Google Flow unifying image and video generation into one seamless workspace, and ProducerAI connecting music creation to visual outputs, the expectation for what an independent artist can produce visually is about to skyrocket. Fans are going to increasingly expect every release to come with compelling visuals — not because it’s fair, but because it’s now possible.

Licensing Is Becoming the Moat

The Suno/Udio settlement story tells us that the future of AI music isn’t lawless — it’s licensed. The AI companies that survive will be the ones that play nice with rights holders. For musicians, this actually means your catalog has new potential revenue streams: AI platforms will need to license your work to let fans create remixes, mashups, and derivative content.

The “AI Collaborator” Narrative Is Winning

Wyclef’s endorsement of Google’s tools, framed as an augmentation of his existing creativity, is the blueprint for how artists will increasingly talk about AI. Not “AI made my song” but “I used AI to explore what a flute would sound like on my track.” The distinction matters — both culturally and legally.

Speed Is the New Superpower

When you can go from musical idea to three-minute track to album artwork to music video in a single workflow, the artists who thrive won’t be the ones with the biggest budgets. They’ll be the ones with the best ideas and the fastest creative iteration cycles. A bedroom producer with great taste and AI fluency can now ship more polished content than a label-backed artist could five years ago.

The Bottom Line

This week wasn’t just newsworthy — it was a demarcation line. Before this week, AI music and AI video were impressive but fragmented: separate tools, separate ecosystems, separate legal battles playing out in slow motion. After this week, the pieces are clicking into place. Google has its unified creative stack. The startups have their licensing deals. The artists have their endorsements.

The infrastructure for a new era of music creation is no longer theoretical. It’s shipping.

And if you’re a musician who’s been waiting to see how this all shakes out before diving in — the shaking is done. The pieces have landed. The only question left is how you use them.

Ready to turn your music into stunning visuals? While the big tech companies build their empires, OneMoreShot.ai is laser-focused on what indie musicians actually need: a fast, intuitive way to create professional music videos powered by AI. No sprawling creative suite to learn, no enterprise pricing to stomach — just your music and your vision, transformed into video in minutes. Give it a spin and see what this new era of music creation feels like firsthand.